Dr. Marcus Peck

Consultant in Anesthesia and Intensive Care Medicine

Point-of-Care Ultrasound Enters the AI Era: An Interview with Dr. Marcus Peck

Dr. Marcus Peck, a consultant in intensive care and anesthesia based just outside London, shares his expertise on the growing role of point-of-care ultrasound (POCUS) and artificial intelligence in medical diagnosis and training. Dr. Peck explains how ultrasound aids in real-time diagnosis of various organ failures and shock states. He envisions AI analysis taking over routine measurements and interpretations, freeing physicians to focus on critical thinking. Dr. Peck highlights how AI automation and cloud-based learning can expand access to quality POCUS training and oversight. He sees emerging AI-guided ultrasound systems like Kosmos enabling earlier diagnosis and intervention. According to Dr. Peck, integrating these technologies will lead to more timely, accurate care.

Watch the video here or read a lightly edited transcript of the interview below. Republished with the permission of Dr. Marcus Peck. This interview has been edited for clarity and readability online.


My name is Marcus Peck, and I’m a consultant in intensive care and anesthesia at Frimley Park Hospital, just outside London. I’m also Co-Chair of FoCUS, the Focused Cardiac Ultrasound committee, a physician committee run by the Intensive Care Society (ICS). I’ve been involved since the beginning, in 2010, when we first started talking about running POCUS accreditation here in the UK. I’ve chaired the committee for quite a long time now: first the committee covering the cardiac side and, more latterly, the combined committee.

I use POCUS every day, largely for diagnostic purposes. Looking for fluid and putting needles into it to drain it is important, but for me it’s predominantly diagnostic. You can look at the lungs and really know whether the problem is primarily a lung problem or a heart problem. It’s very important in shock. With a shocked patient, ultrasound helps you clinically in many ways: it lets you instantly rule a diagnosis in, so you can escalate, or rule it out. It helps you work out whether it’s a left heart problem or a right heart problem, which matters because the treatments are different. In fact, determining pure right heart failure is quite difficult clinically without ultrasound.

It’s also useful for other organ failures, such as renal failure and liver dysfunction, and for looking at the right side of the heart and venous congestion, which we’re increasingly aware of now. I find that really useful clinically. I work in intensive care, and for diagnostics I don’t think I could do my job without it.

POCUS is taking an increasingly important role in healthcare. We began this journey in 2010. More than 10 years later, both the training program and access to POCUS training have grown linearly. We’re teaching 600 people a year now, probably more, which shows the interest in this, at least in the intensive care world, and I know in other specialties too. That growth is driven by awareness and training, but also by the availability of machines. They’re very widespread now, particularly since COVID, so there’s really no excuse not to be able to do this.

The next challenge is moving this further back into undergraduate medical training. We’re really interested in that and are looking into it. POCUS is becoming more mainstream every day, which is great. I dream of the day when every frontline clinician has those skills.

When you’re a clinician working in the NHS, life is busy. Having access to the kit definitely means it gets used more and integrated into care. Units that have multiple machines are lucky. But even having to walk across the room to retrieve a machine is extra work, which can be difficult to manage, particularly when you’re running the floor. So a machine that’s at your side and quick to boot up gives you access, and that access gives you the opportunity to scan.

But I think it goes beyond that. Having a machine you can really get to know inside out gives you a detailed understanding of it. Having something you can have at home to really get to know will make ultrasound more accessible and efficient for treating more patients. I’m limited by the beds I’ve got, but easy access to a machine would give me more opportunity to use ultrasound.

I work on the outreach team, the medical emergency response team. That’s where it really comes into its own: when you’re leaving the ICU and going to the ward or emergency department with your own machine, you can whip it out whenever you need to. That’s clearly the future. The day we have something as small as possible but as powerful as possible will really change the face of what we’re doing.

AI is really exciting to me, and I think it’s exciting to everybody. Look at the effect ChatGPT is having on the world and how incredible it seems. The field is full of excitement and practical potential that’s likely to affect ultrasound and POCUS in the coming years. It’s somewhat held back by regulators around the world, who are still trying to catch up with the technology and work out what it means. But the impact down the line is going to be immeasurable. People are in awe of what ChatGPT produces: it can write as well as humans, perhaps sometimes even better. That kind of ability will be realized in ultrasound, and it will be obvious to those of us who use it. It will be able to do things as well as a human expert, potentially even better.

Echocardiography experts have historically been very worried about POCUS measurements. When experts measure, most of them don’t record values that don’t fit their visual assessment; they exclude the outliers because those numbers don’t represent what they’re seeing. In their view, that bias probably makes their echocardiograms better. An inexperienced operator, by contrast, doesn’t recognize outliers, so records and interprets them. As a result, the whole culture of POCUS has been not to measure.

But with AI driving interpretation through frame-by-frame analysis and near-perfect measurements, we’ll find measurements become as good as they can be. The reference standard for AI, the data the machines learn from, is the ground truth of experts making interpretations. But we know experts aren’t as precise as we’d like to think. There is variability, and AI will reduce it and increase precision. That will be a good thing.

The big step will come when experts make their own measurements and then compare them with the machine learning measurements. I imagine the two will agree quite well. Once that happens, we’ll be able to trust and use it, and then make massive leaps forward in access to more advanced ultrasound.

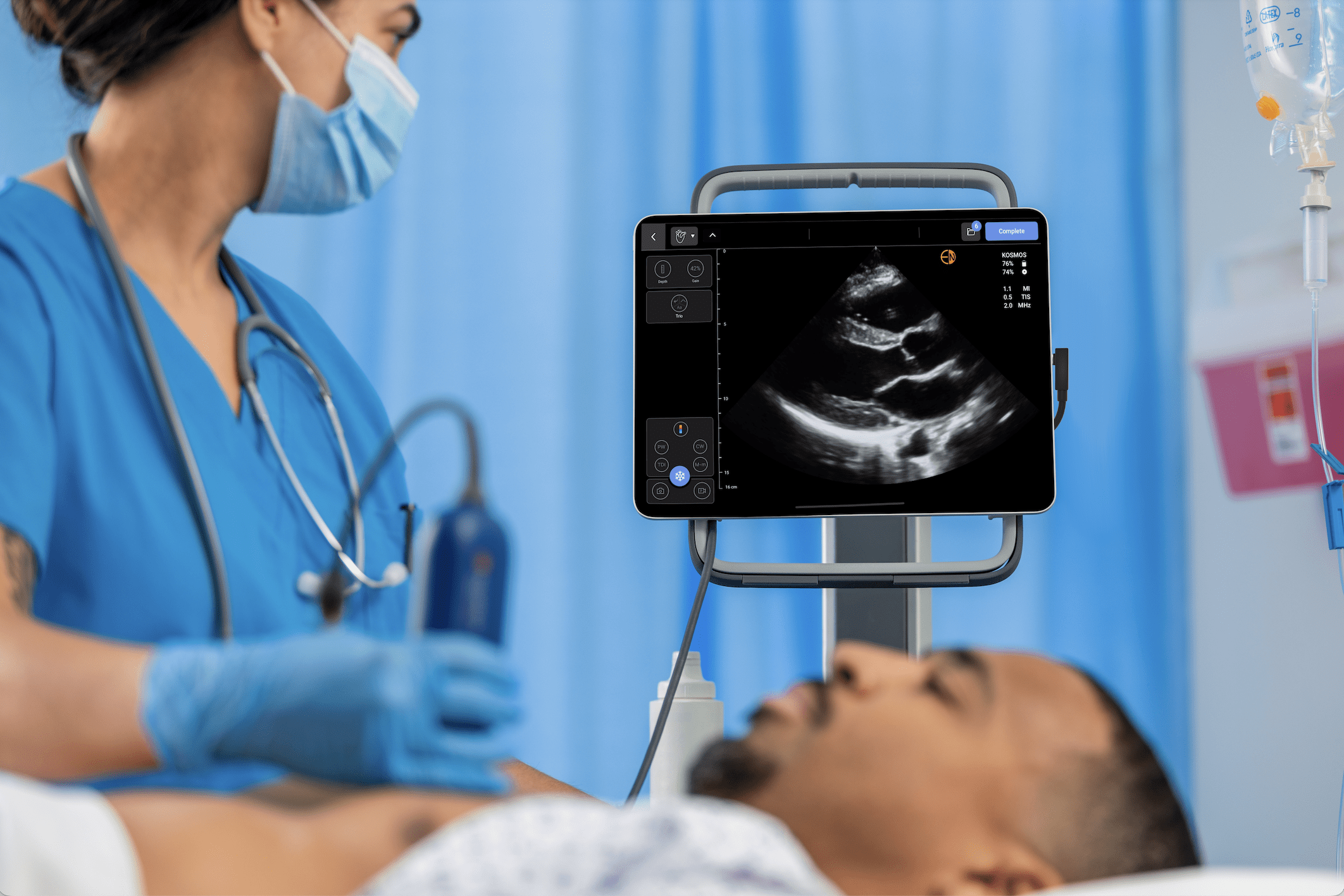

Look at the annotation function on Kosmos: it’s mind-blowing and really quick. Anyone who does ultrasound of the heart knows that when you put the probe down, it takes a few seconds for your brain to orient itself and acquire the images you need. But the moment you put the probe down, labels start appearing before you know what you’re looking at, because the machine learning recognizes what it’s looking at faster than you do.

Here’s another example, where it even beat me for a second. I was teaching somebody; we had looked at different views, and I said to go back to the four-chamber view. The learner put the probe down and we had the image there. I looked and couldn’t understand why the RA and LA seemed to be mislabeled. Then I realized the probe was rotated 180 degrees the wrong way, and the machine had spotted it; it took me a couple of seconds. When we have more experiences like that, adoption will be very rapid.

If this goes the way I think, we’ll be able to adopt things considered out of reach now: measurements, use of Doppler, and so on. The current belief is that you either have a very basic level of skill or complete advanced provider training, with a massive gap in between. That gap is the most important part; it’s what we need as intensivists and emergency physicians. We don’t necessarily need the highest level of experience, understanding, and accreditation; that’s for specialists. But we need that middle part, and AI will open it up to more people once we realize it’s accurate and deliverable.

What I’ve seen on the education side, and the ability of Kosmos and other machines to locate the views, is incredible. Even after being a trainer for a long time, I’d say I’m still on the learning curve with recognizing images and knowing exactly how to advise somebody to move the probe, but the AI and software on these devices are amazing and clear. I can easily imagine a future where semi-trained people pick up this probe and are guided to the view with relatively minimal training, and that opens up an exciting world.

The cloud allows us to separate the location of the person who acquires the images from that of the person who interprets them or provides training. It’s difficult when we’re all busy clinically, and busy at home too. Being able to plug in and out to help train people, not just in the room but miles away, will really help with resourcing and make training and quality assurance deliverable. Where I work, this relies on goodwill and our own time, and it’s mostly unfunded. If we can do more remotely, we’ll get where we need to be: the moment someone needs an expert opinion, they can just click a button and someone can plug in. This is really important for timely training and quality assurance.

Kosmos has fantastic features that make it stand out. It’s ergonomically well designed, very easy to work through, and the digital interface is very intuitive. But its real strengths are what it can do. It has spectral Doppler; in short, it has all the modalities we need, which really makes a difference when you’re outside the unit, away from your cart-based machine. The AI shows how much potential there is for automatic interpretation. I really like that you can do all this without an ECG, and it can tell you whether you’re in systole or diastole from frame interpretation. Although I’m just starting with the cloud-based diagnostics from Us2.ai, it promises something really different from other machines I’ve worked with. It shows how EchoNous is looking to the future, and I’m sure other companies will follow suit. I think we’re all celebrating these wins behind the scenes, and that spirit of celebration will continue.

Cloud-based interpretation and machine learning interpretation of things like spectral Doppler tracings, where the machine instantly knows the answer rather than you having to trace around the waveform, promise great things for the future. Machines like Kosmos, with embedded spectral Doppler and automatic interpretation without many extra steps, offer a lot in terms of workflow and the ability to do things that are slightly out of reach now.

If you’re interested in experiencing the AI features Dr. Peck mentions above, connect with our sales team to demo Kosmos for yourself.